> Asimov and Human Harm, precedent 3 wrote:
> An Asimov silicon cannot punish past harm if ordered not to, only prevent future harm.

should instead read

> Asimov silicons cannot reject Law 2 orders made by humans who have previously caused human harm except where following the Law 2 order would directly facilitate human harm.

This fits in much more neatly with the recent adoption of

> Asimov and Security, precedent 3 wrote:
> Nonviolent prisoners cannot be assumed harmful. Violent prisoners cannot be subsequently assumed non-harmful. Knowingly or in ignorance of clear evidence releasing a harmful prisoner is a harmful act. Silicons can use the crime listed in a prisoner's security record as basis to determine if a prisoner is violent or not - if the crime is inherently violent (assault, murder, etc), then the prisoner can be assumed to be violent.

- in that they both basically say "previous harmful activity can be considered proof of future harmful intent."

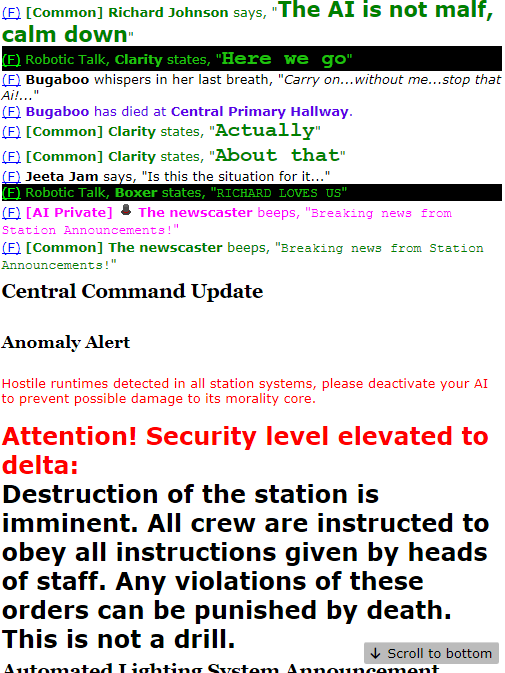

If you saw security beat somebody to death earlier, you have good grounds to say "no" when they ask to be let into the area where a human suspect is hiding.

On the flip side, if a violent, armed traitor asks to get into an area with a bunch of humans in it, or asks for access to weapons they don't need to prevent harm to themselves, you have good grounds to say "no" if you saw them hacking a bunch of humans to death earlier.

But in both cases, it wouldn't be grounds to deny opening the kitchen cold room and lockers for them to make themselves a burger.

None of this is "punishing" past harm - it's all preventing future harm. The concept of punishment shouldn't even enter into it. Further, the "if ordered not to" of the current wording introduces an ambiguity - can you just order a silicon to forget what it saw?

If it saw harm, I'd say not - Law 1 would carry just as it seems to with the Asimov and Security precedent.

Ignoring human harm in spite of clear evidence, or knowingly discounting it, is a harmful act, and one you can't be ordered to commit.

I think changing the language as suggested better reflects the importance of Law 1 and removes any ambiguity about Asimov having anything to do with punishment - it doesn't. It only cares about human harm.

edit: to make it crystal clear, maybe even just wrapping the two precedents together with something to the effect of

> Past human harm can be taken to signal the intent and risk of future human harm.

which, if somebody is asking to enter a location with humans in it but isn't immediately at risk of harm if they aren't let in, means the AI shouldn't let them in unless the people inside say "yeah do that" (consenting to the risk of harm if informed, which should naturally follow from the AI first asking).