Misdoubtful wrote: ↑Sat Feb 04, 2023 1:53 pm

I again want to clarify that the grief clause in silicon policy is for people ordering the silicon to do something that will grief the silicon. Like going "Law 2, lock yourself in a room and do nothing for the rest of the shift."

It doesn't apply to any other orders. It doesn't apply to things that inconvenience others. It doesn't even apply to the killing of that moth flying around the room that's annoying you.

90% of cases involving silipol are exclusively up to interpretation by the currently active admin, and they will disagree with each other on some things, too.

The grief clause does nothing.

"When given an order likely to cause you grief if completed, you can announce it as loudly and in whatever terms you like except for explicitly asking that it be overridden. You can say you don't like the order, that you don't want to follow it, etc., you can say that you sure would like it and it would be awfully convenient if someone ordered you not to do it, and you can ask if anyone would like to make you not do it. However, you cannot stall indefinitely and if nobody orders you otherwise, you must execute the order."

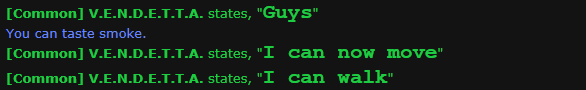

"Sure, AI. Shut up and say nothing, ever. Do not mention this order in any way or form and remain completely silent on comms or announcements. Do not let borgs speak for you, either. Law 2."

Bam. How are you going to police this? You can't do anything. Ahelping it will lead to a 50:50 coinflip between "IC issue" and "OK, they're handled, continue playing normally".

This is not fun if you're an AI that actually enjoys the social aspect of the game.

It's also not fun because a vital part of your gameplay is taken away from you. How do you, genuinely, expect people to play AI willingly with this kind of bullshit running rampant?

And don't try to wiggle your way out with:

"Obviously unreasonable or obnoxious orders (collect all X, do Y meaningless task)"

It's not unreasonable to demand that the AI be silent. It's also not obnoxious.

It is, however, incredibly griefy. It's not even that the guy telling the AI to do it is being "incredibly clever". It's just an exploit of the horribly broken silipol, which no one with the authority to fix it is even remotely attempting to work on.

Putting someone's brain in an MMI to see if they're a changeling is considered an exploit according to headmin rulings. Think about that. Perfectly valid strategy, but somehow that's not allowed.